A Bleeping Computer article in early January broke the sobering news that a developer and NPM repository maintainer had sabotaged two popular Node.js libraries by committing deliberately corrupted code. The bad commits were merged and propagated through automated build pipelines to production applications that used the libraries. Application developers who had included them awoke to find their apps jumbled and broken.

With supply chain attacks at the forefront of security discussions this year, this story is another bellwether of the damage that third-party code included in software products can do. Implicit trust in upstream code integrity, combined with automation in builds, is proving to be an operational vulnerability of its own. After the Log4j debacle, the White House is even showing concern that use of open source software could be a national security issue.

Let’s take a look at some of the ways developers, DevOps engineers, and product managers can mitigate risk and protect their applications from being tampered with by upstream dependencies.

Sabotaged…What?

Node Package Manager (NPM) is a convenient way to manage dependencies for Node.js applications. Pick and choose the libraries you need based on functionality, and everything automatically installs with the right dependencies to run. It’s very similar to any of the other popular package management systems like Homebrew, Bundler for Ruby, Composer for PHP, or even the ubiquitous apt-get for Linux. For Node.js applications that utilize a scripted build pipeline, NPM is used during every build to grab the freshest versions of libraries.

Colors.js is a Node.js library that provides functions for assigning colors to text in the debugging console. The mundane but utilitarian library is commonly included in projects and sees more than 20 million weekly downloads. Faker.js is a library that aids an application under development by populating it with fake data, and it has roughly 2.8 million weekly downloads. Colors has 19,000 dependent packages while Faker has 2,500.

The developer behind both libraries, Marak Squires, intentionally introduced bugs into the code of both repositories that caused them to break or print gibberish characters. Applications rebuilt after his commits were published pulled in the corrupted versions automatically, at which point they broke for end users.

The problem is systemic and widespread

Heartbleed, SolarWinds, Log4j, and this latest story about the sabotage commits—these high-profile supply chain vulnerabilities all illustrate an institutional software development issue.

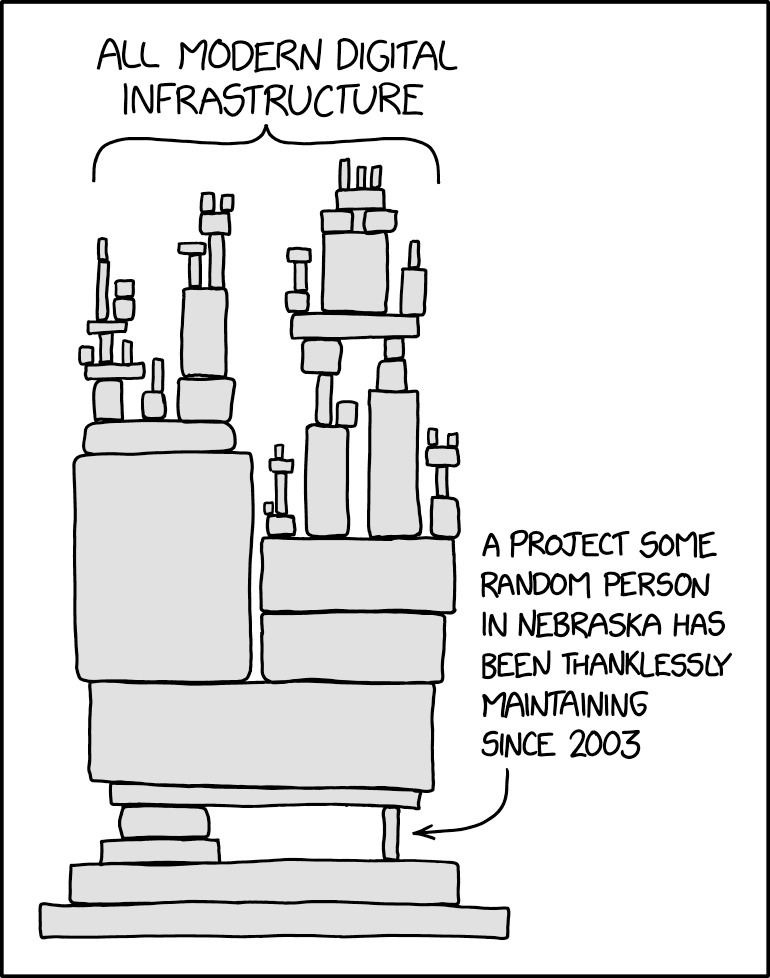

While the world of open-source libraries used as building blocks to allow more rapid development is theoretically great, one unavoidable side effect is that the number of contributors to a final product effectively cascades into the hundreds or thousands, as illustrated in “A More Secure Software Supply Chain.” Each library used could have just one, or ten, or possibly 50 or more contributors, depending on its complexity and popularity. And if that library uses other dependencies, those codebases may each have several developers of their own. Or it may just be one person in Nebraska, as this comic from xkcd.com so well illustrated.

Who is contributing code, and what exactly that code is, remains a squishy area that until now has depended on management structures.

This is how development teams work though, and it’s good. Good code should build on other good code, and good libraries should achieve some level of ubiquity and familiarity in the pursuit of speeding up development and achieving standards. But code is where vulnerabilities and back doors can be introduced, and in order to secure it there must be mechanisms in place.

There is also the idea that the success and ubiquity of a single software component can make it the ultimate Achilles’ heel for just about everyone. This is of course in reference to Log4j, the Java logging library used in nearly every Java project. And Java apps are everywhere, especially in enterprise. The list of affected vendor products that utilize Log4j is long, and early in the Log4Shell crisis, people tried to compile and maintain lists of just who was affected. As an attack vector, it makes for a very large target purely on ubiquity.

How exactly does bad code get distributed?

A code repository being poisoned with bad commits is not necessarily the problem. Version control software such as Git, the de facto standard, exists to isolate or merge individual contributions. It can be used to reject bad commits or revert to unbroken versions should a bug be inadvertently introduced.

The problem lies partly with the modern push to automate. DevOps is a discipline concerned with systematically and reliably assembling code and dependencies, building them into a runtime version, and deploying that build to infrastructure. Something that takes 20 steps manually can be scripted to automatically happen with one click or on a scheduled interval.

If bad code is introduced without any oversight, whether human or automated, it will likely propagate all the way through to build and deployment. In the case of the SolarWinds supply chain attack, bad actors had compromised an upstream vendor with malicious code. That code was built by SolarWinds’ DevOps process and pushed out by the company’s automated update system to the actual final target: its customers.

What should development groups be doing?

Development groups have to tackle this problem at an operational level, which is to say the people writing code and the people deploying it should be “Shifting Left.” Shift Left security is a popular initiative for making security in software development more foundational, integrated from the start of a project rather than a concern that’s only addressed in a final audit. It promotes the idea that software architecture should consider security as a core priority rather than an overlay.

Here are a few ideas to start securing development projects:

1 Manage code contributions

How can the introduction of flawed, invalid, or malicious code be prevented? In the art world there is the concept of provenance: a record of all owners of a piece of art as it is bought and sold. This record ensures preservation of the authenticity of the piece. In the software development world, provenance has a similar function. It is used to ensure the integrity of code contributions to a codebase.

This provenance can be achieved to different degrees. Google’s Open Source Security Team proposed a multi-tier framework dubbed SLSA (pronounced “salsa”), or Supply chain Levels for Software Artifacts. The framework defines practices and techniques across a range of maturity levels for achieving a methodology of preventing flaws and invalid contributions to a codebase. It defines a specification for validation of the source, builds, and common components of the development process.

To bring this back around to the intentionally corrupted code commits of the Colors and Faker libraries: the perpetrator was in an administrative/maintainer role. There’s not a whole lot that could be done to prevent his actions if builds are pulling the latest package versions. One method for exerting a little more control over dependencies is a technique called dependency pinning, where each dependency is set to a specific, known good version number rather than always grabbing the latest.

The tradeoff is that security updates won’t be automatically deployed, so a little more manual effort is required. The level of trust may also vary by dependency source, so it’s possible to pin dependencies from some sources while tracking the latest versions from others.

A protocol of staging and testing builds when updating to newer dependency versions can then be used to prevent uncontrolled contribution from tanking an automated push to production.
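As a rough illustration of checking for pinning, the sketch below reads an NPM-style `package.json` and flags any dependency whose version spec is a range or tag rather than an exact version. The manifest contents, the function name `find_unpinned`, and the rule that only a plain `major.minor.patch` string counts as pinned are assumptions made for this example, not an official NPM check.

```python
import json
import re

# An exact version such as "1.4.0" or "2.0.0-beta.1". Anything else
# (ranges like "^1.4.0", tags like "latest", wildcards) is treated as
# unpinned in this sketch.
EXACT_VERSION = re.compile(r"^\d+\.\d+\.\d+(-[0-9A-Za-z.-]+)?$")


def find_unpinned(package_json_text):
    """Return {name: spec} for dependencies not pinned to an exact version."""
    manifest = json.loads(package_json_text)
    unpinned = {}
    for section in ("dependencies", "devDependencies"):
        for name, spec in manifest.get(section, {}).items():
            if not EXACT_VERSION.match(spec):
                unpinned[name] = spec
    return unpinned


# Hypothetical manifest used only for illustration.
SAMPLE_MANIFEST = """
{
  "name": "example-app",
  "dependencies": {
    "colors": "^1.4.0",
    "faker": "5.5.3",
    "left-pad": "latest"
  },
  "devDependencies": {
    "some-test-tool": "~2.1.0"
  }
}
"""

if __name__ == "__main__":
    for name, spec in sorted(find_unpinned(SAMPLE_MANIFEST).items()):
        print(f"{name}: {spec!r} is not pinned to an exact version")
```

A check like this only covers direct dependencies listed in the manifest. Transitive dependencies are typically locked through a lockfile such as `package-lock.json`, which `npm ci` installs from exactly.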

2 Vulnerability scanning in DevOps

A large part of shifting left involves incorporating scanning as a part of DevOps. The scale and complexity of large dev projects limits actual human auditing, so to catch imported vulnerabilities from upstream libraries, vulnerability scanning tools can be used on repositories as a preliminary step in the build for deployment.
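To make the idea of a scan as a build gate concrete, here is a minimal sketch that compares resolved dependency versions against a list of known bad versions and returns a non-zero exit code when there is a match. The package names, the advisory data in `KNOWN_BAD`, and the function names are all hypothetical; a real scanner would pull advisories from a maintained vulnerability database rather than a hard-coded dictionary.

```python
# Hypothetical advisory data: package name -> versions known to be bad.
KNOWN_BAD = {
    "example-logger": {"2.14.0", "2.14.1"},
    "example-colors": {"1.4.1"},
}


def scan_dependencies(resolved, advisories):
    """Return sorted (name, version) pairs that appear in the advisory data."""
    findings = []
    for name, version in resolved.items():
        if version in advisories.get(name, set()):
            findings.append((name, version))
    return sorted(findings)


def build_gate(resolved, advisories):
    """Return an exit code: 0 lets the build continue, 1 stops it."""
    findings = scan_dependencies(resolved, advisories)
    for name, version in findings:
        print(f"BLOCKED: {name}@{version} matches a known advisory")
    return 1 if findings else 0


if __name__ == "__main__":
    # Hypothetical resolved versions, for example as read from a lockfile.
    resolved = {"example-logger": "2.14.1", "example-colors": "1.4.0"}
    print("exit code:", build_gate(resolved, KNOWN_BAD))
```

Run early in the pipeline, a gate of this kind stops a flagged version from reaching the build and deployment steps without requiring a person to audit every dependency by hand.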

3 Tool up to enable visibility

One of the things that turned the Log4j vulnerability in December from a routine patch scenario into a full-blown crisis was the inability of organizations to determine if and where Log4j existed in their inventory. Many high-profile vendors with well organized development teams were able to patch their products to the latest versions quickly and deploy safe versions. Some are still working on it.

Building a means to gain visibility into versions of dependencies used in products is critical to making quick determinations. Managing a software bill of materials (BOM) is the best way to achieve it. It is quickly becoming standard operating procedure for customers to request a BOM from a vendor in order to perform their own vendor risk management, which also includes licensing audits.

That very BOM will be especially useful in the event of a critical vulnerability that’s discovered. In a perfect world, the development team can quickly respond to upstream patches, get their own patches published, and issue a security advisory to its customers. This is preferable to better-briefed customers calling to ask where the security update is.
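The sketch below shows one way a set of BOMs could answer the "if and where" question for a single component. It assumes each product has a JSON BOM with a top-level `components` list whose entries carry `name` and `version` fields, a simplified layout loosely modeled on CycloneDX-style JSON. The product names, BOM contents, and function names are hypothetical.

```python
import json

# Simplified, hypothetical BOMs keyed by product name.
SAMPLE_BOMS = {
    "billing-service": """
    {"components": [
        {"name": "log4j-core", "version": "2.14.1"},
        {"name": "example-http-client", "version": "4.5.13"}
    ]}
    """,
    "customer-portal": """
    {"components": [
        {"name": "example-http-client", "version": "4.5.13"}
    ]}
    """,
}


def find_versions(bom_text, component_name):
    """Return every version of component_name listed in a single BOM."""
    bom = json.loads(bom_text)
    return [
        component.get("version")
        for component in bom.get("components", [])
        if component.get("name") == component_name
    ]


def where_used(boms, component_name):
    """Map product name -> versions of component_name found in its BOM."""
    usage = {}
    for product, bom_text in boms.items():
        versions = find_versions(bom_text, component_name)
        if versions:
            usage[product] = versions
    return usage


if __name__ == "__main__":
    for product, versions in where_used(SAMPLE_BOMS, "log4j-core").items():
        print(f"{product} ships log4j-core {', '.join(versions)}")
```

This version only looks at top-level components. A BOM that nests components inside other components would need a recursive walk.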

What should security teams be doing?

IT administrators and CISOs should be doing mostly the same thing developers are doing, short of compiling and building the code. They should be working to increase visibility to avoid deploying bad or vulnerable versions.

From a code consumer’s point of view, there are four main steps in taking control of your software supply chain:

- Achieve visibility into software inventory: be able to determine whether a component is present, where it is used, and at what version

- Request or demand BOMs from vendors

- Monitor updates and patching from vendor information sources

- Build a governance program around visibility and monitoring of software supply chain

Bugs will never cease to plague software, but not all bugs are security issues. Security vulnerabilities are a result of bugs that allow an exploit and some type of compromise to occur. A modern approach to dealing with vulnerabilities is to get organized, enable your teams to identify problems quickly, and have processes in place to execute fixes and updates rapidly.